TL;DR (Too Long; Didn’t Read)

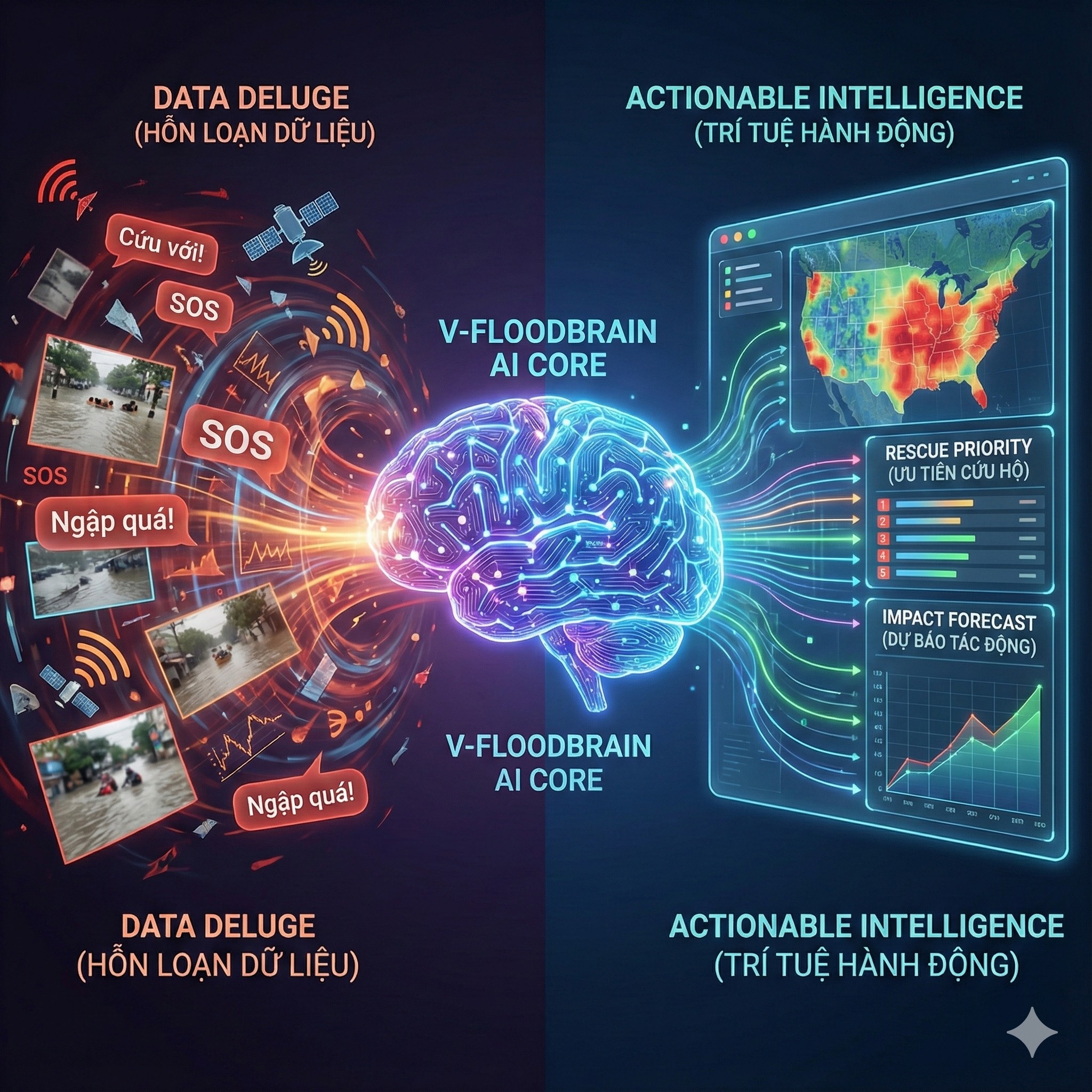

- The Problem arising from Episode 2: Once the Mesh Network (V-FloodNet) is activated, command centers will face a “Data Deluge” of unstructured information from thousands of SOS messages, photos, and sensors.

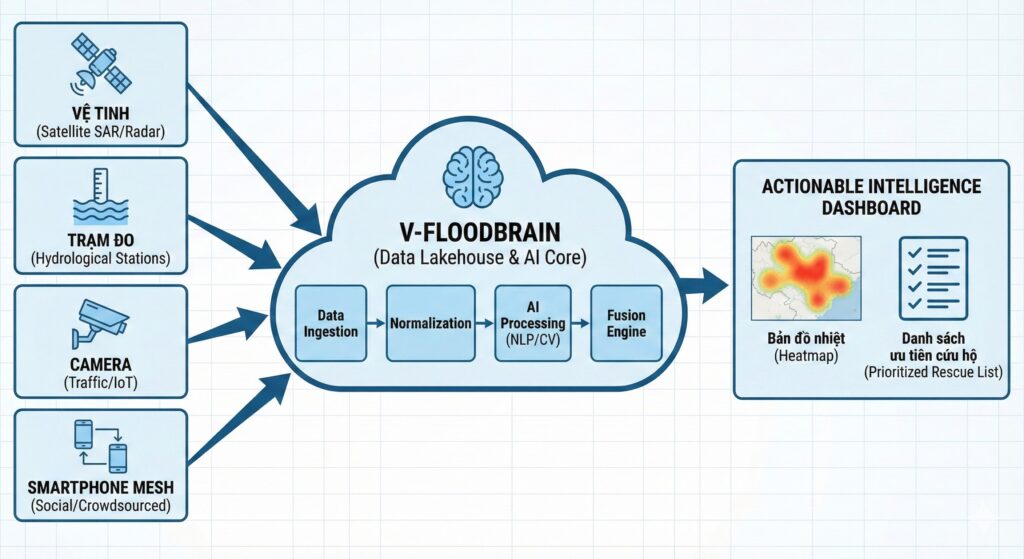

- The Architectural Solution: Constructing V-FloodBrain – a central digital nervous system utilizing a Data Fusion strategy to synthesize four disparate information sources (Satellite, Hydro Stations, IoT, Social/Mesh).

- The AI Engine:

  - Deploying NLP (e.g., Transformer/PhoBERT) and Active Learning mechanisms to automatically classify urgency and extract entities (addresses, needs) from raw text.

  - Utilizing Computer Vision to convert street cameras and citizen photos into “virtual water level sensors.”

- The Output: Shifting from traditional meteorological forecasting to real-time Impact-based Forecasting (Nowcasting), providing street-level actionable intelligence for rescue forces.

1. Introduction: When Connectivity Becomes a Burden

In Episode 2: Decentralized Infrastructure, we successfully designed V-FloodNet – a resilient mesh network capable of maintaining baseline lifeline connectivity even when national telecommunications infrastructure collapses. We built the “pipes.”

But a new, equally critical problem immediately emerges. Imagine the scenario: a typhoon makes landfall and the water rises rapidly at night. The Mesh network activates, and within the first hour, 50,000 SOS messages flood the command center.

- “Help, alley 68 Cau Giay is flooded.” (Missing context: How deep? Are there vulnerable people?)

- “My house is isolated, someone is severely injured, need medical aid ASAP!” (Critical urgency, but ambiguous location).

- Thousands of dark, blurry photos of flooding are attached.

If the command center relies on manual processing, duty teams will be overwhelmed almost immediately. The latency in reading, verifying, and triaging messages leads to potentially fatal delays in decision-making. We have connectivity, but we are still informationally “blind.”

Episode 3 dives into the Logical Layer: How do we build a “Digital Brain” (V-FloodBrain) capable of automatically filtering noise, understanding semantics, and converting raw unstructured data into executable commands?

2. V-FloodBrain Architecture: The Data Fusion Strategy

The core problem with disaster data today is not scarcity, but fragmentation and heterogeneity. We possess “four eyes” looking at the disaster, but each eye looks in a different direction and speaks a different language.

2.1. The “4-Eyes” Paradox

| Data Source (The Eye) | Technical Characteristics | Advantages | Fatal Flaw |
| --- | --- | --- | --- |
| 1. The Macro Eye (Satellite SAR, Weather Radar) | Macro-scale, Remote Sensing | Wide national/regional coverage. Sees through clouds (SAR). | Low Temporal Resolution. Satellite imagery often has a 6-12h latency. Useless for immediate emergency response. |
| 2. The Official Eye (Hydrological Stations) | Precise Point Data, Structured | Extremely high accuracy (Gold Standard). Structured data, easy to process. | Sparse Spatial Coverage. A river gauge does not represent flooding conditions in an alleyway 5km away. |
| 3. The Urban Eye (Traffic Cameras, IoT Sensors) | Visual/Sensor Data, Localized | Real-time visual confirmation at urban hotspots. | Localized and Vulnerable. Dependent on the power grid. Cameras often blur during heavy rain or become useless at night. |
| 4. The Citizen Eye (Social Media, Mesh Network) | Crowdsourced, Unstructured text/image | Real-time, hyper-local coverage. Present in every corner. | Extremely High Noise Ratio. Spam, fake news, duplicate reports. Difficult-to-process unstructured data. |

Engineering Insight: “An effective system cannot rely on a single source. It must be Fusion. V-FloodBrain is a Data Lakehouse architecture designed to ingest all these streams, normalize them, and feed them into AI processing cores.”
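To make "normalize them" concrete, here is a minimal sketch of a shared record that every source could be mapped into before reaching the AI cores. The `Observation` fields, the adapter functions, the input dictionary keys, and the confidence values are all assumptions made for illustration; the article does not define a schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Observation:
    """Hypothetical common record for all four 'eyes'."""
    source: str                      # "satellite", "hydro_station", "iot" or "citizen"
    observed_at: datetime            # always stored in UTC
    lat: float
    lon: float
    water_level_cm: Optional[float]  # None when the source gives no measurement
    confidence: float                # 0..1, assumed prior trust in the source
    raw: str                         # original payload, kept for auditing


def from_hydro_station(reading: dict) -> Observation:
    # Assumed input keys: ts (epoch seconds), lat, lon, level_m (metres).
    return Observation(
        source="hydro_station",
        observed_at=datetime.fromtimestamp(reading["ts"], tz=timezone.utc),
        lat=reading["lat"],
        lon=reading["lon"],
        water_level_cm=reading["level_m"] * 100.0,
        confidence=0.95,  # illustrative value for a calibrated gauge
        raw=str(reading),
    )


def from_citizen_report(msg: dict) -> Observation:
    # Assumed input keys: received_ts (epoch seconds), lat, lon, text.
    return Observation(
        source="citizen",
        observed_at=datetime.fromtimestamp(msg["received_ts"], tz=timezone.utc),
        lat=msg["lat"],
        lon=msg["lon"],
        water_level_cm=None,  # filled in later by the NLP or vision stages
        confidence=0.3,       # illustrative value for an unverified report
        raw=msg["text"],
    )
```

Keeping the raw payload next to the normalized fields is one way to let later stages (or human reviewers) re-check a record without going back to the original stream.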

3. Deep Dive: The AI Engine – Processing Unstructured Data

The biggest challenge lies in data source #4: The Citizen Eye. It is the richest but “dirtiest” data source. To mine it, we need two technological spearheads: NLP (for text) and Computer Vision (for imagery).

3.1. NLP Pipeline: Understanding Vietnamese Distress Signals

Processing SOS messages during a flood is vastly different from Sentiment Analysis in e-commerce. It demands extremely high accuracy in a noisy, time-pressured environment.

A. Linguistic Challenges:

- Semantics and Urgency: The phrase “water is rising” might be a general update, but “water is up to my neck, help” is maximum urgency. AI must discern this nuance.

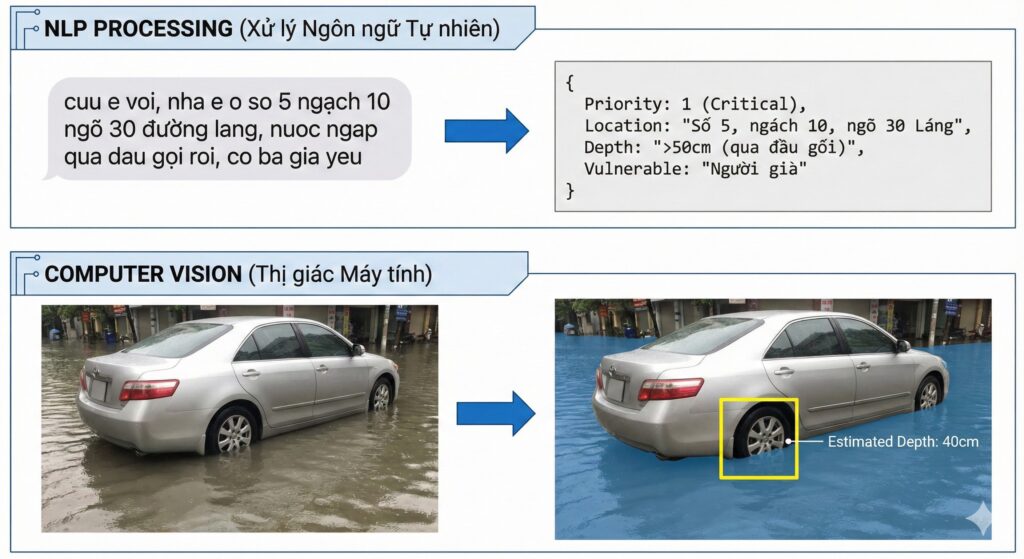

- No Diacritics and Abbreviations: In panic, people often type without Vietnamese diacritics and use shortcuts (e.g., “ngap qua roi, cuu e o 58 ng chi thanh” instead of full sentences).

- Hyper-local Toponymy: Colloquial place names (e.g., “Ong Bay sluice gate”) do not exist on Google Maps.
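One common preprocessing step for the diacritics and toponymy challenges above is to fold every message to a diacritic-free, lowercase key, so that text typed with or without diacritics matches the same entry. The sketch below uses Python's standard `unicodedata` module; the `ABBREVIATIONS` map, the `GAZETTEER` entry (an assumed Vietnamese rendering of the sluice-gate example), and the `place-0001` identifier are hypothetical placeholders, not data from the project.

```python
import unicodedata
from typing import Optional

# Hypothetical lookup tables; a real system would curate these with local volunteers.
ABBREVIATIONS = {"ng": "nguyen", "e": "em"}
GAZETTEER = {"cong ong bay": "place-0001"}  # colloquial name -> internal place id


def strip_diacritics(text: str) -> str:
    """Remove Vietnamese tone and vowel marks, e.g. 'Nguyễn' -> 'Nguyen'."""
    # 'đ' has no Unicode decomposition, so it is handled explicitly.
    text = text.replace("đ", "d").replace("Đ", "D")
    decomposed = unicodedata.normalize("NFD", text)
    # Category "Mn" covers the combining marks left after decomposition.
    kept = "".join(ch for ch in decomposed if unicodedata.category(ch) != "Mn")
    return unicodedata.normalize("NFC", kept)


def normalize_message(text: str) -> str:
    """Lowercase, strip diacritics, and expand known abbreviations token by token."""
    tokens = strip_diacritics(text).lower().split()
    return " ".join(ABBREVIATIONS.get(tok, tok) for tok in tokens)


def lookup_place(text: str) -> Optional[str]:
    """Return the id of the first gazetteer name found in the message, if any."""
    key = normalize_message(text)
    for name, place_id in GAZETTEER.items():
        if name in key:
            return place_id
    return None
```

With this folding, `normalize_message("cuu e o 58 ng chi thanh")` and `normalize_message("Cứu em ở 58 Nguyễn Chí Thanh")` produce the same key. Token-level abbreviation expansion is deliberately naive here and would misfire on ambiguous shortcuts; a trained model would need to handle those from context.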

B. Technical Solution: Transformers and Active Learning

Instead of rudimentary keyword-based models, V-FloodBrain needs to deploy advanced Transformer models, specifically pre-trained variants optimized for Vietnamese like PhoBERT or ViBERT.

- Step 1: Intent Classification (Urgency Triage): The model classifies messages into priority groups:

  - Priority 1 (Critical): Life-threatening (elderly, children, medical needs, rapidly rising water).

  - Priority 2 (High): Evacuation needed, lack of food/clean water.

  - Priority 3 (Info): Situational reporting, no immediate rescue needed.

- Step 2: Named Entity Recognition (NER): The model automatically extracts critical information fields to populate a structured database: [Precise Address], [Number of People], [Contact Phone Number], [Need Type].

- Step 3: Real-time Active Learning: This is key. Before a storm, we lack perfect labeled training data for that specific event. The system operates on a “Human-in-the-loop” model:

  - AI confidently processes 80% of “easy” messages.

  - The remaining 20% of ambiguous or difficult messages are routed to Digital Volunteers for manual labeling.

  - This newly labeled data is immediately used to retrain the model every few hours, making the AI smarter rapidly during the unfolding disaster.
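The human-in-the-loop routing in Step 3 can be sketched as a confidence gate placed after the classifier. This is a minimal illustration, not the project's implementation: the `TriageRouter` class, the priority label names, and the 0.85 cut-off are assumptions, and the probabilities would come from a fine-tuned model rather than being typed in by hand. The cut-off is a confidence threshold and is unrelated to the 80/20 message split mentioned above.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class TriageRouter:
    """Routes classifier outputs to automatic handling or volunteer review."""
    min_confidence: float = 0.85  # assumed cut-off, to be tuned on real data
    auto_labeled: List[Tuple[str, str]] = field(default_factory=list)
    review_queue: List[str] = field(default_factory=list)
    retrain_set: List[Tuple[str, str]] = field(default_factory=list)

    def route(self, text: str, probs: Dict[str, float]) -> Optional[str]:
        """Accept the top label if the model is confident, else queue for humans."""
        label = max(probs, key=probs.get)
        if probs[label] >= self.min_confidence:
            self.auto_labeled.append((text, label))
            return label
        self.review_queue.append(text)
        return None

    def record_volunteer_label(self, text: str, label: str) -> None:
        """Move a reviewed message into the set used for the next retraining run."""
        self.review_queue.remove(text)
        self.retrain_set.append((text, label))


router = TriageRouter()
# Hand-written probabilities standing in for model output.
router.route("water is up to my neck, help", {"P1": 0.96, "P2": 0.03, "P3": 0.01})
router.route("water is rising", {"P1": 0.40, "P2": 0.25, "P3": 0.35})
router.record_volunteer_label("water is rising", "P3")
```

After these calls the first message has been handled automatically, while the second has passed through the review queue and now sits in `retrain_set`, ready for the periodic retraining described in Step 3.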

3.2. Computer Vision: Turning Every Photo into a Sensor

Thousands of flood photos sent back contain valuable data regarding actual water levels, but machines don’t inherently understand them.

Solution: Semantic Segmentation & Reference Objects

We don’t need face recognition; we need water level recognition. Computer Vision models are trained to:

- Segmentation: Separate image regions into water, land, and objects.

- Identify Reference Objects: Recognize objects with standardized sizes in the image, such as: car wheels, traffic signs, utility poles, sidewalks.

- Depth Estimation: Calculate relative flood depth based on how much of the reference object is submerged (e.g., water covering half a sedan tire ≈ 30 cm depth).
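The depth-estimation step reduces to simple arithmetic once a reference object and its submerged fraction are known. The sketch below assumes that an upstream segmentation model (not shown) has already produced that fraction; the `REFERENCE_HEIGHTS_CM` values are rough illustrative sizes chosen to match the tire example, not measured standards.

```python
from statistics import median
from typing import List, Tuple

# Assumed nominal heights in centimetres; real values vary by model and locality.
REFERENCE_HEIGHTS_CM = {
    "sedan_tire": 60.0,  # matches "half a tire is about 30 cm" above
    "curb": 15.0,
}


def estimate_depth_cm(reference: str, submerged_fraction: float) -> float:
    """Depth implied by one reference object: nominal height times submerged share."""
    if not 0.0 <= submerged_fraction <= 1.0:
        raise ValueError("submerged_fraction must be between 0 and 1")
    return REFERENCE_HEIGHTS_CM[reference] * submerged_fraction


def fuse_estimates(detections: List[Tuple[str, float]]) -> float:
    """Combine several detections in one photo; the median resists one bad detection."""
    return median(estimate_depth_cm(ref, frac) for ref, frac in detections)
```

A fully submerged object only gives a lower bound on depth, so taller references (utility poles, traffic signs) become the more informative ones as the water rises.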

Practical Application: “When a citizen sends a photo of a flooded street, the AI automatically analyzes it and reports to the center: ‘Location X, estimated depth 45cm, low-chassis vehicles cannot access.’ This ensures the correct deployment of rescue assets (motorboats instead of trucks).”

4. The Output: From Meteorological to Impact-based Forecasting (Nowcasting)

The ultimate goal of V-FloodBrain is not just to react to what has happened, but to forecast what is about to happen in hyper-local scenarios.

Current meteorological systems tell us: “Cau Giay district will receive 100mm of rain in the next 3 hours.” This information is too generic for operational response.

By combining real-time data from the Mesh network (how much it is raining right here), 3D city terrain data, and historical flooding data, AI can run high-speed Hydraulic Surrogate Models to provide Impact-based Nowcasting:

“Alert: With current rainfall intensity, Thai Ha Street segment from Alley 1 to Alley 5 will reach a flood depth of 60cm within the next 45 minutes. Immediate vehicle evacuation required.”

This is a shift from reporting abstract meteorological numbers to providing specific actionable warnings, minimizing loss of life and property.
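To show the shape of an impact-based output, here is a deliberately simple stand-in: a linear extrapolation of the current rise rate to a depth threshold. It is not a hydraulic surrogate model, which would also account for terrain, drainage, and rainfall forecasts; the function names and the constant-rate assumption are illustrative only.

```python
from typing import Optional


def minutes_until_depth(current_cm: float,
                        rise_cm_per_min: float,
                        threshold_cm: float) -> Optional[float]:
    """Minutes until the threshold depth is reached, assuming a constant rise rate."""
    if current_cm >= threshold_cm:
        return 0.0   # threshold already reached
    if rise_cm_per_min <= 0:
        return None  # water is stable or receding
    return (threshold_cm - current_cm) / rise_cm_per_min


def format_alert(segment: str, threshold_cm: float,
                 minutes: Optional[float]) -> Optional[str]:
    """Turn the estimate into a street-level warning, or None if no alert is due."""
    if minutes is None:
        return None
    return (f"Alert: {segment} is projected to reach a flood depth of "
            f"{threshold_cm:.0f}cm within the next {minutes:.0f} minutes.")
```

For example, a segment currently at 15 cm and rising 1 cm per minute would be projected to reach 60 cm in 45 minutes under this assumption.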

5. Conclusion & Bridge to Final Episode

V-FloodBrain is the missing piece that turns raw data into actionable intelligence. It connects the courage of citizens on the ground (providing data) with the command capabilities of authorities.

However, such a powerful AI system requires immense feedstock. It needs access to real-time traffic camera feeds, high-resolution digital maps, and government rainfall gauge data.

The engineering problems are solved. Now we face the biggest barrier: Mechanism and Policy. How do we break down the “Data Silos” between government ministries and between the state and the civic tech community?

COMING UP IN THE FINAL EPISODE: DATA POLICY & THE CALL TO ACTION.

We will discuss the “Government as a Platform” model, propose Open Data APIs for disaster scenarios, and define the specific roles the Civic Tech community can play right now.

SERIES NAVIGATION

- Missed why we need a new network architecture? Review 🔙 [Episode 1: System Failure Analysis].

- Want to understand the engineering behind connectivity without power? Review 🔙 [Episode 2: Decentralized Infrastructure (Mesh Network)].
